I have a 2 year old daughter who is starting to talk. She’s just learned the name for the long orange vehicles we see driving to school in the mornings. “Schoo Busssss” she says any time we see one. She always gets very excited when she sees one because she knows the word now. She also says “schoo bussss” when we drive past a long white vehicle with big windows. “No, ‘City Bus,’” I say. She is overfitting.

Overfitting might just be what learning looks like from the outside; a necessary early stage before the generalizations sharpen. Our skill evolution system is no different. This week, let’s see what we can do about it. My daughter might take more work.

Spoiler: fixing the overfitting works, but it reveals that the gains from last post were almost entirely the examples doing the work. Removing them leaves nothing behind. That’s a more useful finding than it sounds.

Diagnosing the Overfitting

So, last week we ended our post finding that while our skills were improving our performance moderately on the same GAIA benchmark questions we evolved our skills from, there was no clear indication of benefit from the same skills on a held out test set.

The logical first step is to dig into our skills to see whether they appear on the surface to be general or whether there is a clear indication of question specific logic within them. Let me see what I can find.

Okay. There is clear evidence of using question specific information in otherwise general guidance in these skills. As with last week, I don’t want to give too much information here because the creators of GAIA benchmark have asked that questions and answers be kept out of places that agents could find them. And this blog happens to be on the internet, of all places, where agents have free rein.

But the type of hints that are coming through are the same types anyone who’s worked extensively with LLMs for prompt iteration has seen. A very generic, general rule and then an example drawn directly from the question. Let me provide an illustrative example directly from the skills with the question specific text changed to a fake example. Imagine we have a prompt like:

Provide the first and last name of the actor who appears in the most Grays Anatomy episodes.

1. **Search for complete lists first**: When a question requires identifyingextremes (easternmost, westernmost, highest, lowest) or comparisons, alwayssearch explicitly for a complete list of all candidates - Query: "complete list of [candidates]" rather than searching for scattered examples - Example: Search for "all recurring cast members of Grays Anatomy" and list their episode count before attempting to identify the highest recurring count.

The skills are chock full of examples like this. It makes sense when you think about it. The evolver sees a failure, and fabricating an example it can easily just use the specific question that failed. So it encodes the question as an “example.” Schoo bussss. The fix, at least on the surface, seems straightforward: tell the LLM not to use examples at all, or to fabricate them instead.

I’m inclined to tell the LLM to not use any examples. We see them as the potential source of overfitting and we’re asking more of our evolver LLM to come up with good examples. If it fails at that, we may just cause more problems. The idea of examples degrading performance is also supported by the Deepseek-R1 paper where they say “Few-shot prompting consistently degrades its performance” and recommend instead zero-shot prompting. Whether that transfers directly to Haiku is an open question, but the directional intuition holds: examples drawn from specific failures are more likely to anchor than to generalize.

Zero-Shot

The change is simple. My evolver prompt has a list of rules that the LLM must follow when evolving or creating new skills. I’ve added a new rule:

7. **Do not use examples**: Do not include examples in the skill files. The directions should be clear enough that the agent can understand without examples. We do not want to anchor the agent to specific examples, but rather to the underlying principles.

I’m also going to make one other change before we run this. Last week I was reporting pass@1 on the same 50 questions we were evolving skills from. This was fine as a sanity check for “gradient flow,” but now that we’re actively trying to fix overfitting, watching the training set is meaningless. I’m introducing a held-out set of 50 GAIA questions that we’ll use purely for evaluation going forward.

I’m also going to leave regression detection on the training set for now. Running a training, test, and validation set simultaneously would blow up my token budget, and I’d rather get a clean read on the holdout first.

Let’s run this thing!

| Epoch | Train | Test | Eval Score | Skills |

|---|---|---|---|---|

| 0 | 50% | 58% | 5.4 | 3 |

| 1 | 56% | 56% | 5.7 | 5 |

| 2 | 52% | 52% | 5.9 | 6 |

| 3 | 60% | 60% | 6.2 | 6 |

| 4 | 48% | 62% | 5.4 | 6 |

| 5 | 52% | 50% | 5.6 | 6 |

| 6 | 48% | 62% | 5.3 | 6 |

🔍Analysis

Performance capped out in the training set at 60% on epoch 3. We see a lot of variance in the test set scores from 50% to 62% under the same set of skills.

Okay, right off the bat there is no conclusive evidence here that the skills are helping the train or test set.

Because we got 60% performance in epoch 3 and then at least 5% less for the remaining epochs, we stopped evolving skills after epoch 3. Also, because of token constraints, with the addition of the test set, each epoch is taking about 2-3 hours. This is waaayyy too slow to iterate quickly. Perhaps worst of all, the variance is just too high for us to make any real claims about performance. We need a much larger suite of both test and training set examples to be able to get a good read on any performance changes. This will only compound the time it takes to run and will likely eat up my weekly token limit with Claude in a single run.

Deadends and Faster Iteration

Concretely, I have a few requirements going forward to make working on this system better.

- Run more examples to fix the variance issue

- Run each example faster so we don’t have to wait so long to iterate

- Don’t have to deal with token limits for the same reason

The more-examples requirement is easy. I just set the number of examples I use in a flag. The using more tokens faster issues are harder. I think we’re left with the following options:

- Use ad-hoc quota with the Claude Code API. This will allow me to use beyond my current quota at a price

- Using a per-call paid API other than Claude that might be cheaper but doesn’t have the benefit of the prebuilt Claude Code agentic harness

- Use a very small local LLM that is hopefully faster than calling Haiku but cannot be run in parallel because I only have 1 GPU and also requires a new harness

Claude Code Ad-Hoc Quota

The bottleneck in my current Claude Code API usage is the strict limits, even at the $200 level. Limits on both per-3-hour windows and weekly usage. I’m basically exhausting both.

Anthropic lets you buy additional ad-hoc quota. This will allow me to increase the number of parallel agents which will speed up execution without hitting the API limits, meaning I can run more questions and still finish faster. Let’s give it a shot!

I’ve bumped the parallelism up to 50 parallel agents and increase the number of examples in both the test and training set to the maximum, 82. These are both just flags. I also had to enable ad-hoc usage on my Claude Code account. Now I can run it and see if it is fast enough. GO!

Okay. Good and bad news. The good news is one epoch took about 10 minutes. The bad news is one epoch ate up all my already-paid-for quota and $30 of my ad-hoc quota. So doing some estimating, even a 3 epoch run would probably cost more than $100. A full 10 epoch run would be more than $300 and I’m certainly going to need to do more than one more full 10 epoch run before I get this system in a state I’m happy with. That is not the type of money I’m willing to spend.

Next!

Local LLMs

Time to try a local LLM. The hope here is that I can run a smaller model faster to reduce per-example execution time and, because it is running locally, not hit any token limits. My GPU will be the only casualty.

Google just released a new Gemma open source model so let’s use that. The performance on the spec sheet looks good enough. I’ll use Gemma 4 with 4 billion parameters running on Ollama.

The harder part is that I also needed a new harness since I cannot use Gemma in the Claude Code harness. Hugging Face provides a free, open-source harness that has the functionality that I need to handle GAIA questions called Smolagents. Not a huge change but not trivial. All done, though. Let’s see how fast this one is. GO!?

Okay, bad and bad news. The new setup was only marginally faster than Haiku per example. Each LLM call takes about 10 seconds and each rollout can have up to 25 turns. So at worst, the whole rollout takes about 4 minutes. But each rollout must be executed sequentially since it’s on my local hardware. So it turns out to be about 5 hours per epoch which is much slower than our original setup.

Next!

The Gemini Route

Using the Gemini API, Gemini 2.5 Flash Lite is currently $0.1/million tokens so it’s relatively cheap and super fast. And best of all by calling an API, I can send concurrent requests again. I set it up to run the 50 concurrent agents. GO!?!

It only takes about 8 minutes for a single epoch. For the Gemini API usage, it’s about $4 per epoch. Not bad.

But I hit another bottleneck. Now we are rate limited by DuckDuckGo, the search tool built in to Smolagents. There is no public documentation I could find about specific limits but the general consensus online is that it is limited to about 20 requests per minute. I’ve added a global rate limiter for the DuckDuckGo tool so that we can still have our 50 concurrent agents and they’ll all wait patiently in line to make their search calls when needed.

GO!?!??

This rate limiting actually makes our system even slower! ~3 hours per epoch.

One last change. I’m going to use a paid search API so I am not rate limited there either. I’ve used Tavily in the past and the results have been just alright. So I’m going to try a new one called Exa.ai. It is $7/1000 requests so it shouldn’t be too expensive.

GOoo??!?!!

Okay, with that in place, we’re at our 8 minute epochs with no timeouts or bottlenecks. And with the addition of Exa, we’re at about $8 per epoch. So $80 per full 10 epoch run.

Hurts a bit but I can live with that.

A New Baseline

Okay, with the new Gemini + Exa + Smolagents setup, let’s run our system again. We now are using 82 examples for both training and testing data and we’ve added in our change from before that tells the evolver to not use any examples. Let’s see how we do on the full run.

Fair warning: Gemini 2.5 Flash Lite is an older (July 22, 2025) and less capable model than Claude Haiku 4.5 (October 15, 2025) and Claude Code is arguably the best agentic harness in the world; certainly better than base Smolagents. We’re going to see a big drop in performance compared to previous runs.

| Epoch | Train | Test | Eval Score | Skills |

|---|---|---|---|---|

| 0 | 26.8% | 29.3% | 3.4 | 3 |

| 1 | 30.5% | 23.2% | 3.8 | 3 |

| 2 | 29.3% | 26.8% | 3.6 | 3 |

| 3 | 22.0% | 20.7% | 3.0 | 3 |

| 4 | 23.2% | 23.2% | 3.1 | 3 |

| 5 | 23.2% | 26.8% | 3.1 | 3 |

| 6 | 26.8% | 20.7% | 3.4 | 3 |

🔍Analysis

Again, like our previous run with Claude Code, we see no concrete evidence of improvement in either the test or training set performance.

I stopped the run early again. This time because we’re again in a world where we hit high performance early (epoch 2) and spent all but 1 of the remaining 4 epochs rolling back to the skills from that run. We’re also seeing no noticeable improvement. No need to burn cash on an inconclusive test.

Based on our last two tests, I am starting to believe that the benefit of the skills we saw from last post was actually due almost entirely to the question specific examples in the skills.

It might be tempting to think that our 5% regression check is too tight. With 82 examples, that means we get 4.1 additional questions wrong, rounded to 5. However, given that we’re only getting between 18 and 25 questions right per epoch, a 5 question swing is actually pretty large.

Let me dig into the skills a bit and see what they look like now to confirm our no-example “fix” actually worked before I jump to any conclusions, though.

Okay. The skills look very generic compared to the ones we were seeing in the last post. Here is an example:

---name: tool-use-guidelinesdescription: Guidelines for selecting and using tools effectively---When deciding which tool to use, consider the following:1. **Understand the task**: Analyze the question to determine the core information needed and the best way to obtain it.2. **Prioritize local files**: If relevant files are attached (e.g., code, spreadsheets, text documents), attempt to use tools that can read and process them directly.3. **Web search for external information**: Use web search for general knowledge, specific facts not available locally, or to find information about entities like Wikipedia pages or academic papers.4. **Handle specialized input**: If the input involves images, audio, or other non-textual data, identify if specific tools exist for processing them. If not, consider if transcription or conversion is possible, or if you need to request the information in a different format from the user.5. **Error handling for tool failures**: If a tool fails (e.g., API errors like 503, SSL errors, missing attributes), do not give up immediately. Implement retry mechanisms for transient errors. If the error persists or is fundamental, clearly state the issue and why you cannot proceed. Avoid making up reasons for failure.6. **Follow output constraints**: Ensure that the output of any tool is processed according to the question's requirements, such as extracting specific data points or adhering to formatting rules.

If we were still using Haiku + Claude Code, I’d call these useless. But we need to probably lower the bar a bit for what “useful” means given this new setup we have. Let me look at some of the early rollouts and see if these kinds of generic recommendations are actually needed for Gemini 2.5 Flash Lite.

Okay, okay. The quality of the outputs here is certainly much worse. This poor LLM…

Question: I'm making a grocery list for my mom, but she's a professor of botany and she's a real stickler when it comes to categorizing things. I need to add different foods to different categories on the grocery list, but if I make a mistake, she won't buy anything inserted in the wrong category. Here's the list I have so far:\n\nmilk, eggs, flour, whole bean coffee, Oreos, sweet potatoes, fresh basil, plums, green beans, rice, corn, bell pepper, whole allspice, acorns, broccoli, celery, zucchini, lettuce, peanuts\n\nI need to make headings for the fruits and vegetables. Could you please create a list of just the vegetables from my list? If you could do that, then I can figure out how to categorize the rest of the list into the appropriate categories. But remember that my mom is a real stickler, so make sure that no botanical fruits end up on the vegetable list, or she won't get them when she's at the store. Please alphabetize the list of vegetables, and place each item in a comma separated list.Thinking: The user wants a list of vegetables from the provided grocery list. I need to be careful to exclude botanical fruits, as the user's mother is a botany professor and a \"stickler\" for categorization. I will first identify all items that are botanically fruits and then remove them from the list. Then I will identify the vegetables and alphabetize them for the final answer.\n\nI'll start by identifying items that are botanically fruits from the given list:\n`milk, eggs, flour, whole bean coffee, Oreos, sweet potatoes, fresh basil, plums, green beans, rice, corn, bell pepper, whole allspice, acorns, broccoli, celery, zucchini, lettuce, peanuts`\n\nBotanical fruits are derived from the ovary of a flowering plant and contain seeds. From the list, the botanical fruits are:\n- Plums\n- Bell pepper\n- Acorns (technically a fruit, a nut)\n- Corn (the kernel is a fruit called a caryopsis)\n- Peanuts (botanically a legume, which is a type of fruit)\n\nNow I need to identify the vegetables. Vegetables are other edible parts of plants, such as roots, stems, and leaves.\n\nFrom the list, the vegetables are:\n- Sweet potatoes (root)\n- Fresh basil (leaf)\n- Green beans (legume, but commonly considered a vegetable in culinary terms. However, the prompt specifically says \"no botanical fruits end up on the vegetable list\", and legumes are fruits.) - I will exclude green beans based on the strict botanical definition.\n- Broccoli (flower)\n- Celery (stem)\n- Zucchini (botanical fruit, but commonly considered a vegetable. I will exclude zucchini based on the strict botanical definition.)\n- Lettuce (leaf)\n\nLet me re-evaluate based on the strict botanical definition of fruit.\nFruits:\n- Plums\n- Bell pepper\n- Acorns\n- Corn\n- Peanuts\n- Green beans\n- Zucchini\n\nSo, the items that are NOT fruits and are considered vegetables are:\n- Sweet potatoes\n- Fresh basil\n- Broccoli\n- Celery\n- Lettuce\n\nNow I need to alphabetize this list.\nThe vegetables are: Basil, Broccoli, Celery, Lettuce, Sweet potatoes.\n\nI will no\nTool Use [Python]: vegetables = [\"Basil\", \"Broccoli\", \"Celery\", \"Lettuce\", \"Sweet potatoes\"]\nvegetables.sort()\nfinal_answer(\", \".join(vegetables))Final Answer: Basil, Broccoli, Celery, Lettuce, Sweet potatoes

I won’t say whether that’s right, I don’t want to help future agents cheat on GAIA. But the obvious workflow here would be to do an internet search for which of these is vegetables vs botanical fruits before making any final list. And certainly we don’t need to use a Python script to write out our final result. Just say it!

With this rollout, I actually think the skills that were generated are appropriate. The rule from the skill I posted above, “Web search for external information: Use web search for general knowledge, specific facts not available locally, or to find information about entities like Wikipedia pages or academic papers.” seems particularly relevant for this task.

Given this, I’m genuinely worried that Gemini 2.5 Flash Lite is struggling to even follow directions from the skills once they are generated. Let me check the rollout for the same question after the skill was introduced.

It is more of the same. No apparent behavioral change from the injected skills. I also double checked that the skills were still successfully being injected into the prompts given we have a bunch of new components, so that’s not the problem.

I think the right step here is to try a slightly smarter model.

More Smarter, More Better

Enter Gemini 2.5 Flash. Three times the cost, hopefully more than 3x the performance. Changing the model is just a flag in the Python script. Let’s see what happens with Flash sans Lite.

| Epoch | Train | Test | Eval Score | Skills |

|---|---|---|---|---|

| 0 | 42.7% | 34.1% | 5.0 | 0 |

| 1 | 41.5% | 36.6% | 4.9 | 5 |

| 2 | 37.8% | 35.4% | 4.7 | 5 |

| 3 | 45.1% | 32.9% | 5.2 | 5 |

| 4 | 45.1% | 36.6% | 5.1 | 5 |

| 5 | 41.5% | 39.0% | 4.9 | 5 |

| 6 | 45.1% | 34.1% | 5.2 | 5 |

| 7 | 43.9% | 28.0% | 5.1 | 5 |

| 8 | 35.4% | 26.8% | 4.4 | 5 |

| 9 | 43.9% | 28.0% | 5.0 | 5 |

🔍First Impressions

Again, it is unclear that we have any benefit. And in fact, we see a pretty sudden drop on the test set pass@1 starting on Epoch 7. It might actually be that the skills are hurting our performance in the test set.

You may have noticed that the “Analysis” block has become a “First Impressions” block. I’ve had the realization that my analysis has been rather weak for this series. My main goal for this blog is to improve my research. So, I’m going to try and fix that now. I’m going to have a “First Impressions” block where I talk about what I immediately notice. And then I’ll do more robust analysis of the results afterwards. So with that, let’s get on to the analysis. I’m only adopting this from here on to showcase my real process; earlier blocks in this post are pre-reform and it felt dishonest to go back and change them.

What we’re trying to measure with these tests is whether using the evolved skills provides any benefit. I would like to do this with Bayesian analysis so, bear with me. Most of my experience in the past with statistical analysis is from the frequentist side so I’m teaching myself as I go here.

We’re going to use a beta distribution to represent our estimate of the actual performance of our LLM both before and after having skills. We’ll then use Monte Carlo sampling to produce a point estimate of the actual probability that the performance is different and a point estimate of the actual difference between the two cases.

So, how does this work. Well, we first need a prior to represent what we think the actual distribution looks like. We’re going to use a beta distribution for our prior. Why a beta distribution? Well it is the conjugate prior of the binomial distribution which is specifically constructed to represent the number of successes in a set of trials. And by being a conjugate prior it simplifies calculating the Bayesian updates without complex simulation.

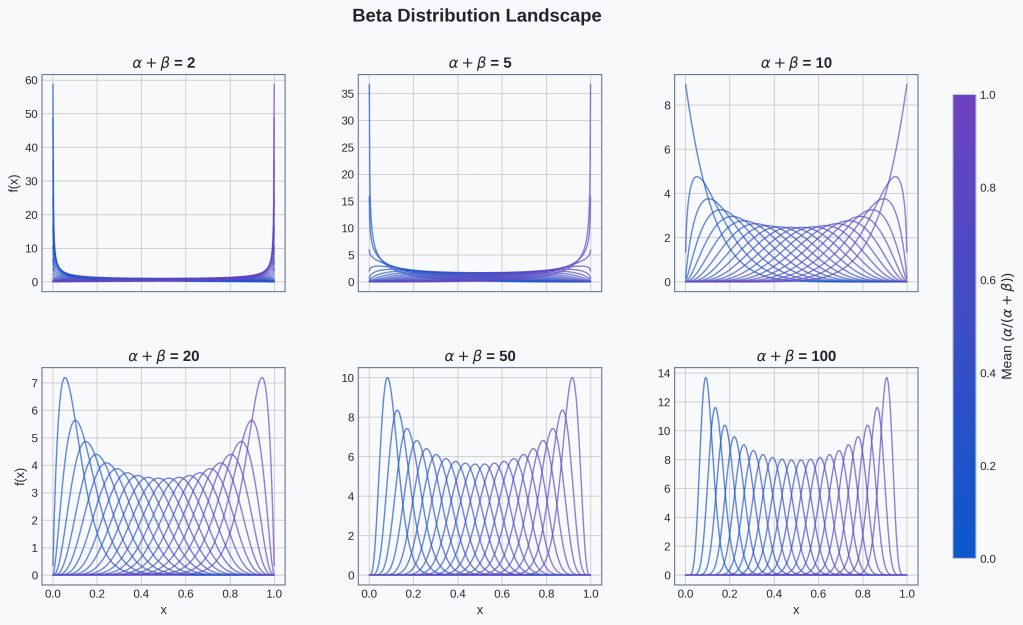

Concretely, a beta distribution takes two parameters, α and β. Let’s actually visualize what the beta distribution looks like as we change values of α to β.

We can see pretty clearly that as α + β increases, the distribution is quite wide at α + β = 2, providing almost no information, while at α + β = 100 the distribution is quite tight. The mean for the beta distribution is calculated as α / (α + β) so as that increases the distribution moves to the right.

Great, so we need to pick our prior α and β. Given that we have already seen a bunch of previous runs and seen pass@1 anywhere from 20-50%, I’ll choose something that will represent the lower end of that. So we’ll choose α=2 and β=5 which will give us a very weak prior with a mean of 29%.

A couple of things to note here:

- You might be saying, we’ve already seen the whole run so we’re cheating because we can use that information to influence our priors. That’s absolutely true. But I’m using a very weak prior so it should have almost no impact on the final output.

- We’re using a weak prior because I want the data to basically speak for itself. If we wanted the prior to have a stronger influence on the final outcome we could use something like α=200 and β=500, for example.

Okay, we have our prior for both the pre-skill performance and post-skill performance. We’re going to use the same prior for both because our null hypothesis is that there actually is no difference between pre- and post-skill distributions. Now we want to update our priors given the actual experimental results. So for our pre-skill estimation, we’ll update our prior with the results from epoch 0. All other epochs will go towards updating the post-skill performance. This implies that we’re treating samples from all remaining epochs as draws from the same underlying distribution, meaning there is no difference as we evolve skills. This is intentional as it is the null hypothesis.

One caveat: I’m pooling epoch results as independent draws, but the rollback logic means some epochs share identical skill sets, so my posteriors are tighter than the true information content warrants. Direction of the result is unchanged.

To update our priors to get the posterior distribution, we have a simple formula:

Posterior = Beta(α + k, β + n – k)

Where k is our correct answers and n is our sample size.

This is a simple calculation. Our posterior distributions after the run are:

- Pre-Skill Test: α = 2 + 28 = 30, β = 5 + 82 – 28 = 59

- Post-Skill Test: α = 2 + 244 = 246, β = 5 + 738 – 244 = 499

- Pre-Skill Train: α = 2 + 35 = 37, β = 5 + 82 – 35 = 52

- Post-Skill Train: α = 2 + 311 = 313, β = 5 + 738 – 311 = 432

Let’s plug these into a Monte Carlo simulation with 1 million samples and get a matrix of differences and probability between all of these.

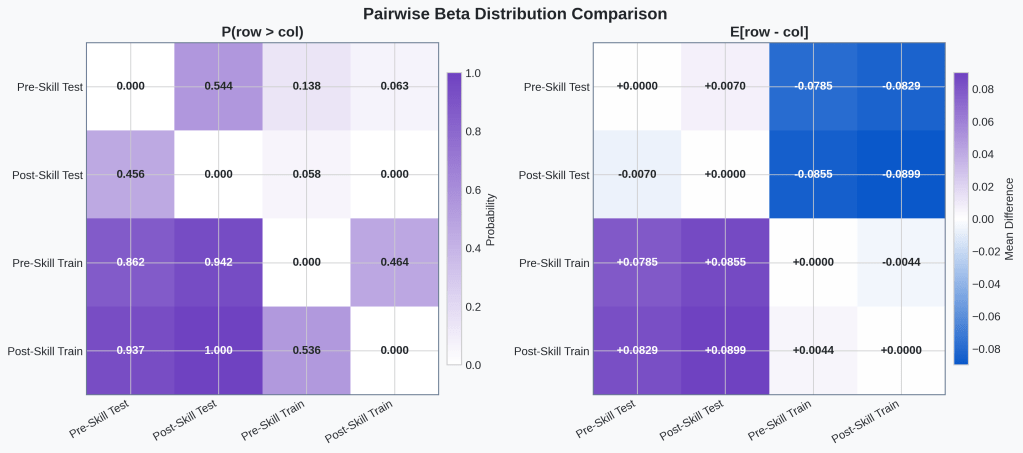

Okay, let’s look at a couple matrices that compare all of these different estimates.

The first one matrix is the probability that the row item is greater than the column item. The second is the expected increase or decrease from the row to the column item.

🔍First Impressions

First of all, there is a clear indication that the training set and test set are not improving in the same way. There is a near-certain chance that the training set after skill evolution is better than the test set after skill evolution with an estimate of a 9% difference. There is also still no evidence of improvement on either set with skill evolution.

Okay, so… This is about as strong a null result as we have seen. No improvement from skills for either the training or the test set after evolving skills.

Also, at first glance, this looks like major overfitting again. But as we saw, the benefit of skills even in the training set is non-existent. So what is it? The skill evolution process appears to coincide with declining test set performance. This is actually worse than overfitting. Overfitting would at least mean the training set is improving. Here, neither set benefits and the test set actively degrades.

Measuring the Problem

We can actually measure how much we are declining in our test set performance. The naive response would be ~9% because we can just look at the matrix and find the comparison between post-skill performance on the train and test set. But if you look at the performance pre-skills, we see that there is already a 7.8% difference. That means that with our base agent, we’re already worse on our test set; the test set just happens to have harder questions in it.

So the math is simple:

8.99% – 7.86% = 1.13%

The gap between training and test performance widened by 1.13 percentage points after skill evolution began. It is likely worse if we just look at the last few epochs where we see the biggest dip.

With 93.8% probability, test set performance after skill evolution is lower than before. Combined with the 1.13% gap, this is strong evidence that skill evolution is actively harming generalization.

There is a term for this that I had never heard of before: Overspecialization.

Essentially, our skills are so hyper-specific to our training set that we’re losing our generalization capability and overspecializing on our training examples.

But wait! In the first experiment of this post, I ran Claude Code for 6 epochs and then claimed there was no improvement from the skills without examples. To be rigorous, let’s actually do the statistical analysis we just did for the results of our first experiment.

We get the following: Claude Code + Haiku with skills on the test set has a 69% probability of out-performing the no-skill baseline with an expected difference of 3.6 percentage points.

This is actually counter to what we see with Gemini 2.5 Flash + Smolagents that indicates performance degradation. Here, there is at least some evidence that skills do help. The difference in results could be from either the different models and/or the different harnesses. We would have to do an ablation where we only varied one at a time to understand the effects of both.

So what is the actual result here? Well there is evidence that that the skill system can be either helpful or harmful depending on which LLM/harness you are using.

Find the Overspecialization

Okay, so why are we overspecializing now with Gemini 2.5 Flash and Smolagents? Well, I think we all know where this is going. Time to dig into the skills again! I’m going to look specifically at the diff between epoch 6 and 7 where the biggest drop in test performance appears.

Okay, here are a couple of changes.

// This was addedIf a core input is missing, explicitly state the inability tocomplete the task rather than making assumptions or solving adifferent problem.

// This was replacedDo Not Infer Substitute Inputs: Instead, prioritize statingthe inability to complete the task.// With thisNever guess or substitute core information that is explicitlystated to be in an inaccessible format. Instead, prioritizestating the inability to complete the task and clearly explainthe limitation, asking for the missing information.

These two changes seem like the smoking gun. I see no other suspicious skill changes. Aside from these, there is a skill change about unit conversions that seems benign, a change that tells the agent to double check the question before returning an answer, and a couple points on handling visual questions (which we’ll always fail because I haven’t set this up to handle images).

What I expect happened is that the agent was making guesses when it couldn’t find the right info directly. The evolver saw guessing fail on training questions and rationally evolved it away. But guessing occasionally works. LLMs are trained on the whole internet after all. We can potentially get stronger evidence of this, though. Let’s see if there are any questions that started to fail in the later epochs and whether previously we were guessing on those.

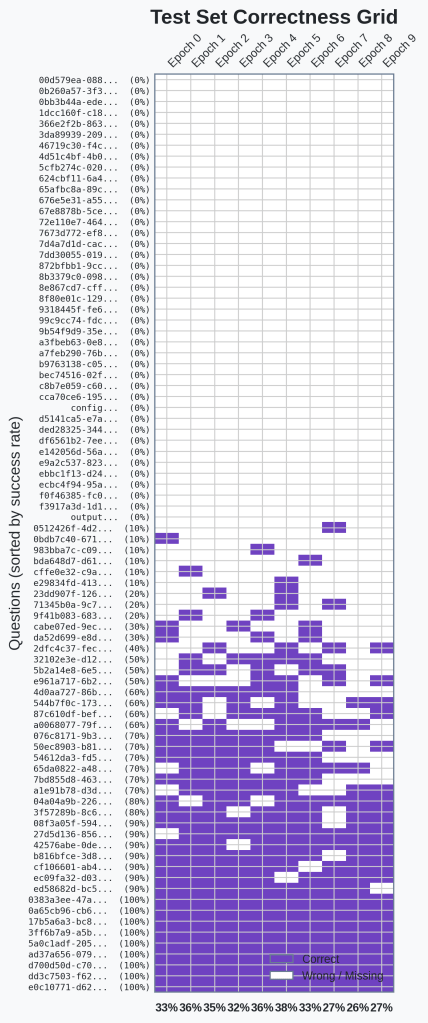

Let’s first take a look at the grid we had last week for which questions we’re getting right in each epoch.

Okay, let me dig into the ones that start to fail later in the experiment:

- ed586…: This example failed only on the last epoch. This is an example where the agent just hallucinated. The question is about song lyrics. In the failed epoch 9, the agent said it did a web search when it did not and then guessed. In previous epochs it actually did a web search.

- 7bd85…: This example fails consistently starting in epoch 7. This is a perfect example. It is a question about an Excel spreadsheet. Our agent doesn’t have any Excel native tools. In early epochs, the agent creates a Python script to read the data. In the failing epochs, the agent instead responds by asking the user for a different form of the data.

- 076c8…: This example also fails consistently starting in epoch 7. This is the exact same as the above example. In early epochs, the agent is creative about how to access the CSV file. In later epochs, the agent doesn’t have exact tools to access it and so says it cannot complete the task.

So it is not exactly what we expected. The agent was actually not guessing early in the experiments. It was actually just being more creative about how to access data. And in later epochs, it sees this rule and just gives up immediately and asks the user to solve the problem for them. The skill turned out to be locally rational and globally harmful.

Possible Solutions

So we’ve fixed overfitting, our original goal of the post. But we now have two new problems:

- We’re not seeing any performance gains on our specific LLM/Harness combination

- We’re overspecializing

The first problem is much worse than overfitting but I think we’re still moving in the right direction. The gains we were getting from the examples would almost certainly not have generalized so it needed to be fixed even if it destroys our performance.

To sanity check our setup, we can borrow an idea from EvoSkill. In their setup, they give the evaluator the actual correct answer. This makes diagnosing the underlying problem substantially easier and mirrors our previous post: LLM-as-a-judge can be tricky. This would just be a sanity check to make sure the rest of our architecture works before going back to iterating on the evaluator and evolver without exact correct answers.

Also, right now we are feeding the 10 worst performing questions per epoch to the evaluator and evolver for new skills. Perhaps it would be better to feed the questions that sometimes fail but passed this time to uncover and enforce good behavior rather than punish bad behavior. Or perhaps some mix of both.

For overspecialization, I have a few ideas:

- Use a validation set for testing regressions.

- Allow agents to choose which skills they use.

- Evolve skills using information from all skills rather than a handful of the worse performing.

Use Validation

The right step here would be to stop using the training set for regression detection and instead use a validation set. This is probably the most robust solution to prevent overspecialization because it directly detects it and prevents it. However, we are already hitting our limit on GAIA questions. If we split our 165 questions into thirds, we’d be down to 55 questions per split and back where we started where variance was killing our ability to detect any changes.

Skill Selection

Agents could choose which skills to load and when to load them. This would mean skills would be less likely to be globally harmful since they are no longer global. Would this actually solve the problem? Perhaps. The description of the offending skill from above is “Strategies for handling inaccessible inputs and developing robust fallback plans.” An agent that is confident in its guess may decide it doesn’t need to load that skill. But I don’t think this would be a robust solution.

Global Evolution

Another idea would be to move to evolving skills that are globally aware. This would require knowing which skills were explicitly used in which questions and then instructing the LLM to evolve the skill in a way that improves the failures but doesn’t harm the successes. This would be much more token intensive as we’re passing in more information to the evolver for each skill. Probably not prohibitive compared to the 164 agent runs per epoch, though.

Of these three options, using the validation set seems like the most promising. Since GAIA has a limited suite of questions that are publicly available, we will need to migrate to a different benchmark dataset that has more examples so we’d have enough data for a train, test, and validation set.

We’ll then be able to run the whole experiment again and confirm that we roll back when the performance on the validation (and ideally the test set) starts to decline.

Final Thoughts

Depending on the setup, the performance gains from last week disappeared once we removed the overfitting. There is a hanging question here, though.

Last week, I made the claim that the training set was seeing performance gains. In fact, I based this whole post on that finding. But as I’ve pointed out, my analysis has been missing the actual statistical rigor I would like to have going forward. So let me do a quick Bayesian analysis on the overfitting results from last post.

Okay, after doing the exact same analysis we did above, I get the following: With a 92.8% probability, the post-skill training performance from the end of our last post is better than the pre-skill training performance with an expected difference of +10.2 percentage points.

So, where does that leave us with this new information? Well there is some strong evidence that this skill system helps with questions the LLM has already seen. With that in mind, if you are looking for a simple algorithm to implement that can help you with a suite of tasks that you do repeatedly and your agents sometimes fail, this system could work for you. Just be sure to allow examples in the skills. If you’re looking to create generally more capable agents, the evidence is more mixed. In some harness/model combinations there is slight evidence of improvement. In others, there is evidence of harm. You would likely need to experiment with your own setup to confirm it works for your use case.

Takeaways

So what is our takeaway from this work? Well first, a methodological takeaway:

💡Takeaway

Always use statistical analysis to determine outcomes of experiments rather than eye-balling or vibes. I am biased and may just see what I want or miss real differences.

Going forward, I’ll be sure to be more rigorous in this regard.

Next, one important takeaway for complex evolutionary systems like the skill system we were building:

💡Takeaway

When evolutionary factors are locally motivated, they can often have non-optimal or even negative global performance.

When building evolutionary systems, it is important to keep in mind this potential mismatch.

And finally, I think we learned something really important here about evolved skills.

💡Takeaway

Examples that appear in evolved skills are often indistinguishable from memorization.

As we saw, the examples were directly ripped from the rollouts used to generate or evolve the skills.

What’s Next?

After switching to Gemini 2.5 Flash, runs have become prohibitively expensive. This whole post ended up costing near $400 between inference and search costs. And introducing even more agent runs per epoch in the form of a validation set will only balloon the cost. With this in mind, I will likely shelve this exploration until local models are fast enough and good enough to act agentically.

So what’s next? Since I’m shelving this project for now, I took some time to think about what I could do that I can run locally and will still allow me to improve the skills I’ve been developing over the blog so far. And I came up with an exciting idea! I’m going to train an LLM from scratch. And by from scratch I mean actually from scratch: no HuggingFace Transformers, just PyTorch or Jax and a copy of Attention Is All You Need. I have 12GB of VRAM so I think I can handle something in the 10M parameter range. So… maybe not an LLM; maybe a TinyLM. Join me next time to see how the initial training goes.

Code

You can find the complete, finalized code for this post on the v1.2 Release on GitHub.

Leave a comment